A few weeks ago, I was coaching a founder through a difficult hiring decision. He’d already asked ChatGPT about it.

“It gave me a solid answer,” he told me. “But something felt off.”

I asked him three questions. His whole framing shifted. The answer he needed was completely different from the one he got.

The AI wasn’t wrong, exactly. It just didn’t know what it didn’t know.

The Assumption Nobody Talks About

Every time you ask an AI a question, it makes a quiet assumption before it says a single word.

It assumes it understood you well enough to answer.

Not partially understood. Not probably understood. Fully understood — well enough to generate a confident, fluent response right now.

That’s a dangerous assumption. Especially when the person asking has a specific situation, a unique history, and context that lives entirely in their head.

A real expert doesn’t do this. A real expert asks questions first. They probe. They clarify. They say “tell me more about that” before they commit to a direction.

An AI that skips that step isn’t being helpful. It’s being efficient at the wrong thing.

What We Built Instead

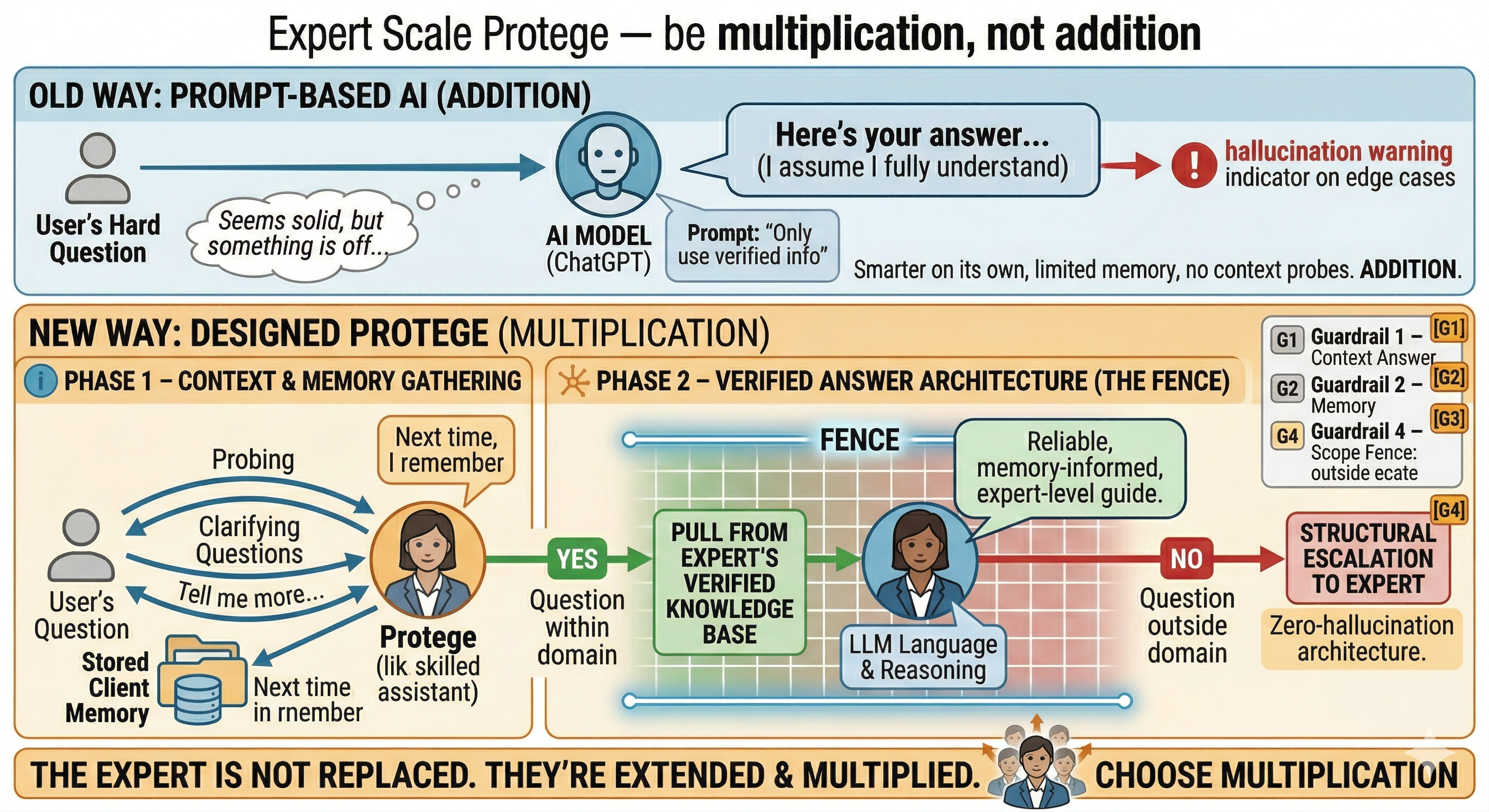

When we designed Expert Scale proteges, we made a deliberate choice to break that pattern.

Proteges do two things a standard AI won’t do.

First, they ask. Before answering anything substantive, they gather context. They ask follow-up questions the way a real expert would — not interrogation, just calibration. Understanding the situation before offering a perspective on it.

Second, they remember. Every conversation a protege has with a client is stored. The next time that client shows up, the protege already knows their history, their challenges, the decisions they’ve already tried. It’s not starting from scratch. It’s building on a relationship.

That’s not prompt engineering. That’s how expertise actually works.

The Fence

Here’s where the architecture gets important.

When a protege does answer, it’s not reaching into the open internet or pulling from everything an LLM was ever trained on. It’s pulling from the expert’s verified knowledge base first.

That knowledge base is built by the expert themselves. Their frameworks. Their methodologies. Their hard-won experience. Content they’ve reviewed, curated, and confirmed.

The LLM doesn’t replace that. It works inside it.

Think of the knowledge base as a fence. The LLM operates within that fence — it can use language and reasoning to communicate the expert’s ideas more fluidly. But it can’t wander outside the boundary. It can’t invent positions the expert never held. It can’t generate confident-sounding advice on topics the expert’s KB doesn’t cover.

If someone asks something that falls outside the fence? The protege doesn’t guess. It escalates to the expert.

That’s the zero-hallucination architecture. Not a prompt that says “don’t make things up.” A structural constraint that makes making things up impossible.

This Isn’t a Prompt. It’s a Design Decision.

I want to be clear about something, because I see this confused constantly.

Telling an LLM to “only use verified information” is not the same as building a system where it structurally can’t do anything else.

One is a suggestion. The other is architecture.

The difference shows up in edge cases. In the 2 AM question nobody anticipated. In the client who asks about something just slightly outside the expert’s stated domain. A prompted system drifts. A designed system holds.

We built for the edge cases. Because that’s where trust gets lost.

The Expert Isn’t Replaced. They’re Extended.

Here’s what surprised me most as we built this.

The experts who’ve put their knowledge into the platform report feeling more confident in what their protege says — not less. Because they know the fence is real. They built it themselves.

And clients get something they genuinely couldn’t access before: expert-level guidance at 2 AM, consistent with everything the expert actually believes, that remembers the last conversation and asks better questions before diving into answers.

The expert’s knowledge isn’t diluted. It’s multiplied.

That’s the bet we made when we built Expert Scale. Not that AI is smarter than experts. But that the right architecture can make an expert’s knowledge available — reliably, honestly, at scale — in a way that no amount of prompt engineering ever will.

Addition vs. multiplication.

I chose multiplication.

Want to see how it works?

Start a conversation with my Digital Protege — it’s built exactly the way I described. First session is free.